What Is a Deepfake and How Is It Fooling People?

In layman's terms, a deepfake combines face and voice cloning, powered by Artificial Intelligence, to create lifelike, computer-generated videos of a real person.

To create a quality deepfake video, developers need to accumulate tens of hours of video footage of the person whose voice and face are to be cloned. They also need footage of a human imitator who has mastered the target person's facial mannerisms and voice. Let's dig a bit deeper.

What Are Deepfakes?

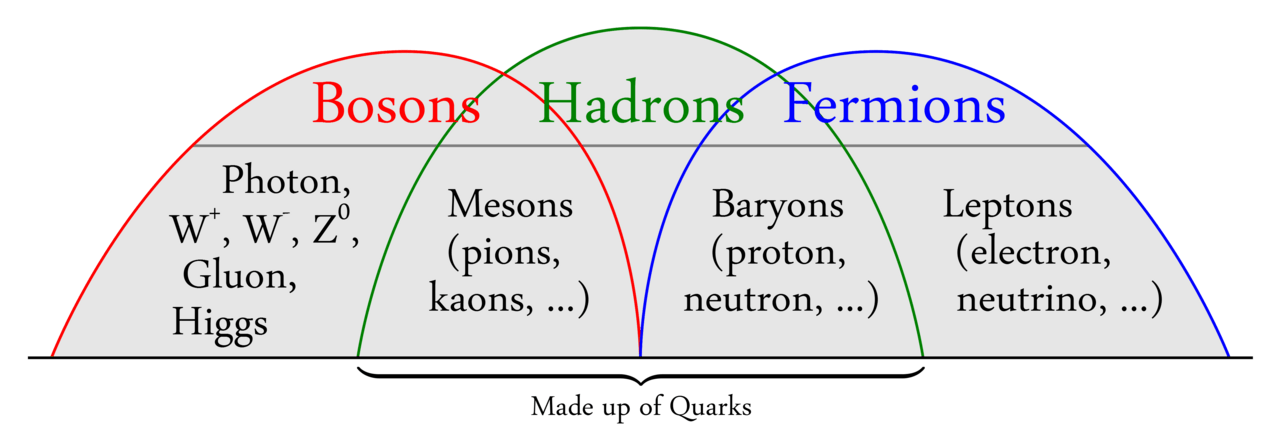

The technique is called deepfake because it relies on deep learning, which is a branch of machine learning. The AI applies neural networks to large data sets to create a fake that looks real. The software learns what a source face looks like from various angles so that it can transpose that face onto a target.

Think of this technology as a lifelike mask on the face of an actor. It has advanced over time, along with AI in general. Hollywood has used this technology to transpose real or fictional faces onto other actors. A popular example is 2016's Rogue One: A Star Wars Story, in which the filmmakers brought Peter Cushing back to the screen (more examples are given below).

What Are Deepfakes Used For?

The worst part of deepfakes is that most of them are made for pornographic purposes and can ruin people's lives. According to the AI firm Deeptrace, there were 15,000 deepfake videos online as of September 2019. Of these videos, 96% were pornographic, and 99% of those mapped the faces of female celebrities onto porn stars.

New software and techniques allow even unskilled people to create a deepfake from a handful of pictures. As a result, deepfake videos are likely to spread beyond the world of celebrities and be used against ordinary people as a form of revenge.

A professor of law at Boston University, Danielle Citron, said, "Deepfake technology is being weaponized against women."

Is It Just About Videos?

The answer is no. Deepfake technology can also create convincing but entirely fictional photos of the target person from scratch. In addition to videos and pictures, audio can be deepfaked too. Developers can use the technology to create voice clones or voice skins of public figures.

One popular case of deepfake audio was published by The Wall Street Journal. According to them, the chief of a UK subsidiary of a German energy firm transferred around £200,000 into a Hungarian bank account after being phoned by a fraudster who imitated the German CEO's voice and asked the target person to send him the cash urgently.

How Are Deepfakes Made?

Initially, this technology was used only by experts in AI and machine learning. But now, with the advent of apps and easy-to-use software, even unskilled people can create deepfakes with ease. Software tools such as FakeApp and DeepFaceLab have made a significant impact on this field because they are available to everyone.

These software tools offer exciting possibilities that range from dubbing, improving and repairing video to solving the uncanny valley effect in video games.

University researchers and special effects studios have long demonstrated what is possible with video and photo manipulation. Deepfakes themselves, however, were born in 2017, when a Reddit user posted doctored porn clips on the site. In those videos, the developer swapped the faces of celebrities, including Scarlett Johansson, Gal Gadot, Taylor Swift, and others, onto porn stars.

Making deepfakes involves the following few steps:

- Running several face shots of the two people (the source and the target) through an Artificial Intelligence algorithm called an encoder.

- The encoder then finds and learns similarities between the two faces.

- After finding the similarities, it reduces them to their shared common features, compressing the images during the process.

- A second Artificial Intelligence algorithm known as the decoder is then taught to recover the faces from the compressed images.
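
The steps above can be sketched with a toy model. This is a minimal illustration, not how FakeApp or DeepFaceLab is actually implemented: the six-number "faces", the identity and expression vectors, the linear encoder and decoders, and the learning rate are all hypothetical choices made for the example. It also assumes the commonly described setup in which each person gets their own decoder and the swap is performed by passing one person's compressed face through the other person's decoder, a step the list above does not spell out.

```python
import random

random.seed(0)
DIM = 6  # size of a toy "face image" (assumed for illustration)

# Hypothetical toy data: face = identity + expression * shared direction + noise
ID_A = [1, 1, 0, 0, 0, 0]
ID_B = [0, 0, 1, 1, 0, 0]
EXPR = [0, 0, 0, 0, 1, 1]

def make_faces(identity, n=30):
    faces = []
    for _ in range(n):
        e = random.uniform(-1, 1)  # e.g. how open the mouth is
        faces.append([i + e * d + random.gauss(0, 0.02)
                      for i, d in zip(identity, EXPR)])
    return faces

def small_weights():
    return [random.uniform(-0.1, 0.1) for _ in range(DIM)]

faces_a, faces_b = make_faces(ID_A), make_faces(ID_B)

encoder = small_weights()  # shared: compresses any face to a single number
decoder_a = {"w": small_weights(), "b": [0.0] * DIM}  # rebuilds person A
decoder_b = {"w": small_weights(), "b": [0.0] * DIM}  # rebuilds person B

def encode(face):
    return sum(w * x for w, x in zip(encoder, face))

def decode(decoder, code):
    return [w * code + b for w, b in zip(decoder["w"], decoder["b"])]

def loss(decoder, faces):
    """Mean squared reconstruction error over a set of faces."""
    total = 0.0
    for face in faces:
        rebuilt = decode(decoder, encode(face))
        total += sum((r - x) ** 2 for r, x in zip(rebuilt, face))
    return total / len(faces)

def train_step(decoder, face, lr=0.05):
    """One gradient step on the reconstruction error for a single face."""
    code = encode(face)
    err = [r - x for r, x in zip(decode(decoder, code), face)]
    # Gradient w.r.t. the code, computed before the decoder is changed
    grad_code = sum(w * e for w, e in zip(decoder["w"], err))
    for i in range(DIM):
        decoder["w"][i] -= lr * err[i] * code
        decoder["b"][i] -= lr * err[i]
        encoder[i] -= lr * grad_code * face[i]

loss_before = loss(decoder_a, faces_a) + loss(decoder_b, faces_b)
for _ in range(200):
    for face_a, face_b in zip(faces_a, faces_b):
        train_step(decoder_a, face_a)  # the encoder is shared by both people
        train_step(decoder_b, face_b)
loss_after = loss(decoder_a, faces_a) + loss(decoder_b, faces_b)

def distance(u, v):
    return sum((p - q) ** 2 for p, q in zip(u, v)) ** 0.5

# The swap: compress a face of A, then rebuild it with B's decoder
swapped = decode(decoder_b, encode(faces_a[0]))
print("loss before/after training:", loss_before, loss_after)
print("swapped face is closer to B:",
      distance(swapped, ID_B) < distance(swapped, ID_A))
```

Real tools work on images with deep convolutional networks rather than six-number vectors, but the structure is the same idea: one shared encoder, one decoder per person, and a swap at decoding time.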

How Do You Spot a Deepfake?

Spotting a deepfake is getting harder as the technology improves and evolves. In 2018, researchers from the USA discovered that deepfake faces didn't blink like normal people. This is because the majority of pictures fed to the encoders and decoders show people with their eyes open, so the algorithms never learn about blinking. However, as soon as this weakness was revealed, developers worked to cover the flaw and soon produced videos with normal blinking.

While high-quality deepfakes are hard to spot, inferior ones are easier. The lip-syncing might be poor, or the skin tone too patchy to look real. There can also be flickering around the edges of the target person's face due to poor rendering. In addition, fine details such as hair are difficult to render well.
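
As a rough illustration of the blinking cue, the sketch below counts blinks in a series of per-frame eye-openness scores. Everything here is an assumption made for the example: the scores would have to come from some face-tracking tool that is not shown, and the `closed_below` threshold and frame rate are arbitrary values, not figures from the researchers' work. Since newer deepfakes blink normally, a low blink count is at best a weak hint.

```python
def count_blinks(eye_openness, closed_below=0.2):
    """Count closed-then-open transitions in a per-frame openness series."""
    blinks = 0
    closed = False
    for value in eye_openness:
        if value < closed_below:
            closed = True          # eye is currently shut
        elif closed:
            blinks += 1            # eye reopened: one full blink
            closed = False
    return blinks

def blinks_per_minute(eye_openness, fps=30.0, closed_below=0.2):
    """Blink rate for a clip, given its frame rate (assumed 30 fps)."""
    minutes = len(eye_openness) / fps / 60.0
    if minutes == 0:
        return 0.0
    return count_blinks(eye_openness, closed_below) / minutes

# Hypothetical one-minute clip (1800 frames) containing two blinks
clip = [0.3] * 600 + [0.1] * 4 + [0.3] * 600 + [0.1] * 3 + [0.3] * 593
print(count_blinks(clip), blinks_per_minute(clip))
```

A clip whose rate is far below what is normal for a person on camera could be flagged for closer inspection, not treated as proof of manipulation.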

Universities, governments, and tech firms are all funding research into tools that can detect deepfakes. One such effort is the Deepfake Detection Challenge, which is backed by Microsoft, Amazon, and Facebook. It brings together research teams from around the world, who compete with each other to build the best deepfake detector.

In January 2020, in the run-up to the 2020 US election, Facebook banned deepfake videos that were likely to mislead people into thinking that someone had said words they didn't actually say.

Popular Examples of Deepfakes

The following two examples show how deep learning technology can produce deepfake videos convincing enough to mislead people who aren't aware of AI or related tech.

Donald Trump as Saul in the Show Breaking Bad

While most deepfakes try to fool people, Better Call Trump: Money Laundering 101 is just a parody to make people laugh. This scene is taken from the popular show Breaking Bad, which features Jesse Pinkman and Saul Goodman (as Donald Trump).

In the scene, Saul Goodman explains the basics of money laundering to Jesse Pinkman. To add a touch of realism, the creator also transposed the face of Jared Kushner (Donald Trump's son-in-law) onto Jesse's face, making the scene an almost perfect heart-to-heart.

This deepfake video was made by the YouTube creator Ctrl Shift Face, who used DeepFaceLab to deepfake Trump's and Kushner's faces frame by frame. The voices were provided by Stable Voices, a custom AI model that is designed to be trained on real speech samples.

Obama's Public Service Announcement

The most convincing deepfakes have been created using impersonators who can mimic the source's gestures and voice, and this video is one of the best examples. Produced by BuzzFeed, it features actor and comedian Jordan Peele. The developers used FakeApp and Adobe After Effects CC to create the deepfake video. They transposed Peele's mouth over Obama's and replaced Obama's jawline with one that followed the movements of Peele's mouth.

Finally, FakeApp was used to refine the footage through over 50 hours of automatic processing.

Celebrities and politicians are the most common victims of this technology. Less than a year before the above video was made, computer scientists at the University of Washington also used neural network AI to model the shape of Obama's mouth. They then adjusted it to lip-sync to an audio input.

Final Verdict – Actions Being Taken Against Deepfakes

Deepfakes are likely to do more harm than good. The U.S. House of Representatives' Intelligence Committee sent a letter to Google, Twitter, and Facebook asking how they and other social media sites planned to fight deepfakes in the 2020 election.

In addition, Congress asked the Director of National Intelligence to prepare a formal report on deepfake technology.

Government institutions like DARPA, along with several educational institutes, including the University of Washington, the Max Planck Institute for Informatics, Carnegie Mellon, and Stanford University, are also experimenting with deepfake technology. These institutes are studying both how to use GAN (generative adversarial network) technology and how to combat it.

They want to build an AI-based algorithm that can detect whether something is a deepfake. Let's hope that they soon develop a deep learning tool that can identify modified areas in images and videos, so that anyone can easily tell whether a video is legitimate or a deepfake.